EVIDE vs Execution Certification

Visual summary · v2.0 — full analysis below

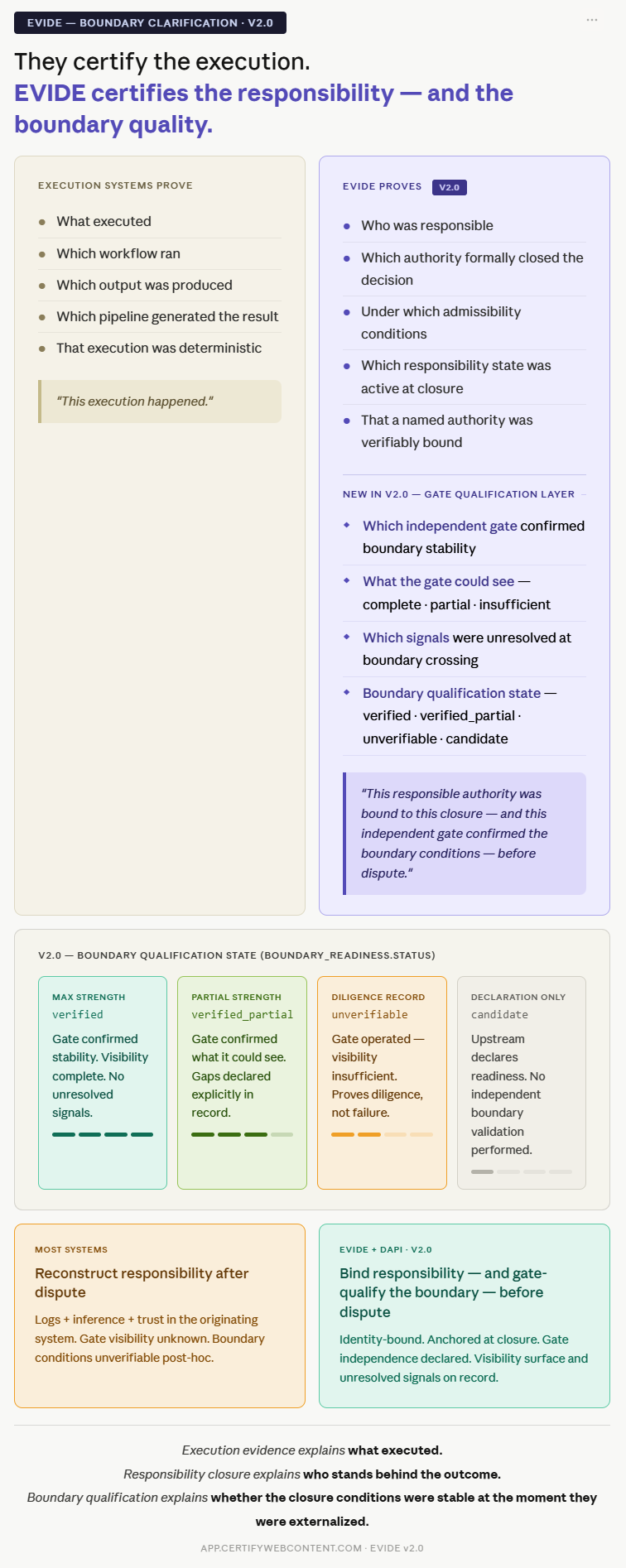

A growing class of systems focuses on proving that an AI pipeline ran correctly: deterministic execution logs, reproducible outputs, runtime audit trails, behavioral proofs. These are valuable and necessary. They answer the question of whether the execution itself was correct.

EVIDE answers a different question:

"Who was responsible for the decision that resulted from that execution — and was that responsibility formally closed, bound, and independently verifiable before any dispute arose?"

These are not competing answers. They address different evidentiary layers. Confusing them leads to governance gaps that become visible only under audit, litigation, or regulatory investigation.

Execution certification systems are designed to make machine behavior provable. The comparison below sets what they prove against what EVIDE proves:

| Execution Systems Prove | EVIDE Proves |
|---|---|
| What executed | Who was responsible |
| Which workflow ran | Which authority closed the decision |
| Which output was produced | Under which admissibility conditions it was accepted |
| Which pipeline generated the result | Which human responsibility state existed at closure |
| That a model was invoked | That a named authority was verifiably bound to the outcome |
| That the execution was deterministic | That the classification context was explicit and anchored |
| That the output matched a specification | That the threshold state was declared and recorded |
| Gate visibility: usually not part of the evidentiary object | Which independent gate assessed boundary stability |
| Boundary qualification: usually not represented | Boundary qualification state: verified · verified_partial · unverifiable · candidate |

They cannot say: "this responsible human authority was verifiably bound to this closure state — and the gate's visibility limits were recorded as part of the evidentiary object — before dispute."

EVIDE operates at the responsibility closure boundary — the moment a decision formally becomes the declared responsibility of an identified, authority-bound human actor. EVIDE anchors:

- the identity of the authority who declared responsibility

- the intervention type and rationale at the moment of closure

- the classification state and its operational stability

- the upstream governance structure that was active

- the threshold that was declared met, not met, or not defined

- the admissibility conditions that existed at the closure point

- the human oversight level that was declared

- the authority attribution status of the threshold owner

- which independent gate assessed boundary stability at closure

- the gate's observational coverage — declared, partial, or insufficient

- which signals remained unresolved at the moment of boundary crossing
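The anchored elements listed above can be pictured as the fields of a single immutable record. The following Python sketch is illustrative only: `ClosureRecord`, its field names, and the enum classes are assumptions made for this example, not EVIDE's actual schema. Only the enumerated values (met / not met / not defined; declared / partial / insufficient) are taken from the text, and all sample values are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class ThresholdState(Enum):
    MET = "met"
    NOT_MET = "not_met"
    NOT_DEFINED = "not_defined"


class GateCoverage(Enum):
    DECLARED = "declared"
    PARTIAL = "partial"
    INSUFFICIENT = "insufficient"


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class ClosureRecord:
    """Hypothetical container; one field per anchored element in the list above."""
    authority_id: str                    # identity of the declaring authority
    intervention_type: str               # intervention type at closure
    intervention_rationale: str          # rationale at closure
    classification_state: str            # classification state and its stability
    governance_structure: str            # upstream governance that was active
    threshold_state: ThresholdState      # met, not met, or not defined
    admissibility_conditions: Tuple[str, ...]
    oversight_level: str                 # declared human oversight level
    threshold_owner_attribution: str     # authority attribution status
    assessing_gate: str                  # independent gate that assessed stability
    gate_coverage: GateCoverage          # declared, partial, or insufficient
    unresolved_signals: Tuple[str, ...] = ()


# Illustrative values only; none of them come from a real EVIDE record.
record = ClosureRecord(
    authority_id="user_87421",
    intervention_type="manual_review",
    intervention_rationale="candidate score near cutoff",
    classification_state="stable",
    governance_structure="hr_policy_v3",
    threshold_state=ThresholdState.MET,
    admissibility_conditions=("review_completed",),
    oversight_level="human_in_the_loop",
    threshold_owner_attribution="attributed",
    assessing_gate="gate_a",
    gate_coverage=GateCoverage.PARTIAL,
    unresolved_signals=("signal_x",),
)
```

Making the record frozen mirrors the idea that a closure state is fixed at the moment of closure. It only prevents attribute reassignment inside a Python process; it is not an evidentiary guarantee in itself.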

"EVIDE proves the responsibility structure that existed when the decision was formally closed."

This is the evidentiary object that matters when a decision is challenged externally — in audit, in court, in regulatory review. Not the execution log. The responsibility record.

EVIDE is frequently misread as a governance dashboard, an audit log, or an AI monitoring tool. It is none of these.

EVIDE exists at the responsibility closure boundary. It creates independently verifiable evidentiary objects that bind a closure state to an identified human authority.

The most critical architectural distinction between execution certification and EVIDE is temporal — and it defines liability exposure.

With execution evidence alone, a challenged decision forces the operator to reconstruct who was responsible after the fact: searching logs, cross-referencing audit trails, inferring authority chains. This reconstruction is always contestable, because it depends on the integrity of the very system that is under scrutiny.

EVIDE anchors the responsibility structure at the exact moment of closure, under a verified identity. When the decision is challenged — days, months, or years later — the record already exists. No reconstruction is needed. No inference is required.
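One way to picture "anchoring at the moment of closure" is a record that is sealed with a timestamp and a digest when the decision closes, so that a later check needs only the record itself. The source does not describe EVIDE's actual mechanism; the sketch below assumes a plain SHA-256 digest over canonical JSON, and the function names `seal_closure` and `verify_seal` are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def _canonical(body: dict) -> bytes:
    # Sorted keys and fixed separators give a stable byte representation.
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")


def seal_closure(closure: dict, closed_at: datetime) -> dict:
    """Return the closure state with its timestamp and a digest over both."""
    body = {"closure": closure, "closed_at": closed_at.isoformat()}
    return {**body, "digest": hashlib.sha256(_canonical(body)).hexdigest()}


def verify_seal(sealed: dict) -> bool:
    """Recompute the digest from the record alone and compare."""
    body = {"closure": sealed["closure"], "closed_at": sealed["closed_at"]}
    return hashlib.sha256(_canonical(body)).hexdigest() == sealed["digest"]


# Illustrative closure state and timestamp (both hypothetical).
sealed = seal_closure(
    {"authority": {"id": "user_87421", "role": "HR Reviewer"}, "threshold": "met"},
    datetime(2025, 1, 15, 9, 30, tzinfo=timezone.utc),
)

# A later change to the closure state no longer matches the stored digest.
tampered = {**sealed, "closure": {**sealed["closure"], "threshold": "not_met"}}
```

Here `verify_seal(sealed)` succeeds and `verify_seal(tampered)` fails. A bare digest is only tamper-evident if it is held somewhere the originating system cannot rewrite; how EVIDE achieves independent verifiability is outside what this sketch shows.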

"EVIDE + DAPI bind responsibility before dispute."

This is not a technical feature. It is an evidentiary position. And in the context of the AI Act, it is the difference between being able to demonstrate compliance and being forced to reconstruct it.

EVIDE can anchor a closure state. But anchoring a closure state to a declared name is not the same as anchoring it to a verified identity. That distinction is the difference between a declaration and an attributable commitment.

DAPI (Digital Attestation of Personal Identity) is the identity verification layer that makes EVIDE's responsibility binding materially attributable. It provides the verified identity reference that EVIDE stores inside the authority object at closure:

```
"authority": {
  "id": "user_87421",
  "role": "HR Reviewer",
  "verification": "DAPI-XXXX"   ← verified identity reference
}
```

Without the DAPI verification field, the authority.id field remains a system-internal identifier — traceable within the originating system, but not independently attributable outside it. With DAPI, the closure object carries a verified identity reference that can be independently confirmed without access to the originating system.
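The distinction between a system-internal identifier and an independently attributable one can be expressed as a small check on the authority object. This is a minimal sketch under stated assumptions: the function name `attribution_scope` and its return labels are hypothetical, and it tests only whether a `verification` reference is present. Confirming that the reference is genuine would require DAPI itself, whose interface the source does not describe.

```python
def attribution_scope(authority: dict) -> str:
    """Classify an authority object by how far its identity can be attributed."""
    if not authority.get("id"):
        return "unattributed"                # no identifier at all
    if authority.get("verification"):
        return "independently_attributable"  # carries a verified identity reference
    return "system_internal"                 # traceable only inside the origin system


with_dapi = {"id": "user_87421", "role": "HR Reviewer", "verification": "DAPI-XXXX"}
without_dapi = {"id": "user_87421", "role": "HR Reviewer"}
```

With these inputs, `attribution_scope(with_dapi)` yields `"independently_attributable"` and `attribution_scope(without_dapi)` yields `"system_internal"`.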

This is why EVIDE + DAPI establishes a materially different accountability structure from execution-certification or evidence-only systems:

- Responsibility establishment: DAPI provides the verified identity reference associated with the declared authority

- Responsibility binding: EVIDE anchors the closure state to that verified identity

- Pre-dispute attribution: the binding exists before any challenge arises

- Independent verifiability: the record survives outside the originating system

The EU AI Act does not focus only on whether AI systems execute correctly. It requires that human oversight be demonstrable — meaning that the responsibility structure around high-impact AI decisions can be produced on demand, independently of the originating system.

- Article 9 (Risk management system) requires risk management documentation that is independently verifiable

- Article 12 (Record-keeping) requires logging and traceability at the decision level, not only the model level

- Article 14 (Human oversight) requires that human oversight be structurally embedded and documentable

- Article 17 (Quality management system) requires quality management and clear accountability chains

This is the gap EVIDE was designed to address. Not by replacing execution evidence — but by introducing the responsibility closure layer that execution evidence alone cannot provide.

- Execution certification — proves the machine ran correctly

- EVIDE — proves the responsibility structure that existed when the decision closed

Responsibility closure explains who stands behind the outcome.

Boundary qualification explains whether the closure conditions were stable at the moment they were externalized.